Hi All

Seem to be running into scaling issues of some sort.

I have set up an environment for a company as follows:

1x Main LNMS server only handling memcached, RRDCached (>1.7) and apache. 16 cores 32GB memory

1x MariaDB server (10.2.2) running ONLY MySQL 16 cores, 16 GB memory

7x Pollers, all running with 16 cores and 16 GB memory. I have set the number of polling threads on each to 64 in the crontab.

So my problem is this:

I add devices to poller_group 0 to be polled by Poller 1. This goes well, and I leave the system running for roughly an hour and the average polling time in the webui registers as ~95 seconds for its 210-odd devices.

Now I add devices to poller_group 1 to be polled by Poller 2. This still goes well. Again I leave the system running for half an hour to an hour, without any errors reported for any of the devices. Poller 1’s time goes up a bit, to about 110 seconds, and Poller 2’s time comes in at about 80 seconds for its 190-odd devices.

Then the issues start. When I add the third poller, and add the 200 devices that need to be polled by it to poller_group 2, all of a sudden devices that are supposed to be polled by Poller 1 (poller_group 0) start reporting that they have not been polled in the last 15 minutes. This does not make sense, since the first two pollers were running fine before Poller 3 was added. We have these issues with only ~600 devices added, and in the end we need to monitor 4500.

My question is, does this sound like the database is not keeping up? Or is there something I am missing? I am running a distributed system on my own environment, ~700 devices, 3 pollers, and all is well. Even on the old 5.5 version of MariaDB.

All of the virtual machines in the LNMS environment are running from the same physical server. Disk inside the machine is SSD, so I don’t think latency is an issue in the server environment.

Any ideas will be GREATLY appreciated.

Consider yourself one of the lucky few who has somehow managed to get a specific poller to poll devices in a specific group.

No documentation explains how this is done, and no answers from the community when specifically asked.

If you could even help the rest of us figure out the black magic that you used to get Poller 2 to only poll poller_group 1, it would be greatly appreciated. We may then be able to get to step 3 and even help you out when we can get basic functionality working.

Hi @jinx-lnx

Sorry for only responding now, I somehow missed the reply coming through.

I had created poller groups for my devices, based on rules. I created the groups beforehand, and the “intelligence” lived in a few shell scripts. Once the devices are added to a poller group, the rest is actually very easy to do.

In the config I had set the values as described here, but basically the line you will need in the config is:

$config['distributed_poller_group'] = 1;

Then this poller will poll only the devices belonging to poller group 1.
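For context, here is a rough sketch of how that line might sit alongside the other distributed polling settings in a poller's config.php. The option names are the ones I recall from the LibreNMS distributed poller documentation, so please verify them against your version; the hostname `lnms-main.example.com` and the port numbers are placeholders, not values from my setup.

```php
// Sketch of the distributed polling section of config.php on Poller 2.
// Assumption: option names follow the LibreNMS distributed poller docs;
// hostnames and ports below are placeholders.

$config['distributed_poller'] = true;                 // enable distributed polling on this node
$config['distributed_poller_name'] = php_uname('n');  // identify this poller by its hostname
$config['distributed_poller_group'] = 1;              // poll only devices in poller group 1

// Shared services running on the main LNMS server (placeholder address).
$config['distributed_poller_memcached_host'] = 'lnms-main.example.com';
$config['distributed_poller_memcached_port'] = 11211;
$config['rrdcached'] = 'lnms-main.example.com:42217';
```

Each poller gets the same block with only the `distributed_poller_group` value changed, so Poller 1 would use 0, Poller 2 would use 1, and so on.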

I hope this helps a bit?

Btw, this issue has been solved. My system is scaling as intended and I am getting the most I can from the hardware I have.

Thanks for your reply!

I think the reason I may have been experiencing issues is particularly with Docker.

None of the containers that I have tried to use will accept or recognize that argument for configuration. No matter how I try to pass the group value, the Docker instance will only poll the default poller group.

I am glad to hear that manually editing the config.php (I assume that’s how you did it?) works.

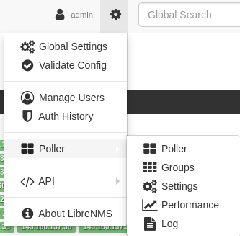

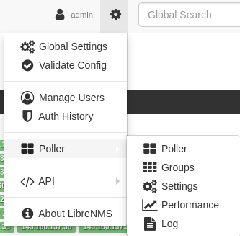

There is also a new way to do it in the GUI, thanks to Murrant. Under Poller > Settings in the Web UI you will be able to set which poller is attached to which group, etc., so have a look there.

I don’t have any such option. I can see pollers, and their logs and their performance and graphs etc. but cannot see any way to assign a poller to a group.

This is what I was referring to. Are you saying you don’t have that ‘Settings’ part?

Edit: Sorry for potato quality screenshot

There’s a Settings part - but where in the settings do you set a poller to a group?

I did it old school, I did it in the config.php of each poller.

$config['distributed_poller_group'] = 0;

I did it like that.

Ahh OK, cool, because yeah, I could NOT find any way to do it in the WebUI.

Thanks for your replies!