Hi,

I noticed that all of our alerting broke on 4/11/2019. The transports still work via the “test transport” button, and all of the alerts are still triggering according to the alert history, but none of them will actually transport.

I have been reading fully verbose poller output but haven’t found anything that’s jumped out at me.

What could have happened? Can someone please recommend some next actions for me to take?

Thanks,

Durzzo

You can run through rule testing on the CLI (https://docs.librenms.org/Alerting/Testing/). Be sure to use the -d debug flag.

Can you post the output of validate.php?


Thank you so much Chas! I will run this debug action first thing in the morning!

We have some developers prepared to pick up the alerts from a syslog transport any day/hour now and it would be embarrassing for me to tell them it’s currently broken and I don’t know how to fix it.

That being said, your constructive input is sincerely appreciated, Chas. Let me know if there is something I can do for you in return for your assistance.

I will be donating to this project just as soon as I am able to move my little family into our new home. There is real value here and I just want you to know it is appreciated.

Kind Regards,

Durzzo


When I run the alerts this way they transport with no problems. However, the natural rule matches I am seeing from the poller are not transporting in any way whatsoever.

The validate.php script returns no issues besides the altered .svg files, and the daily.sh script ran OK as well.

All alerting is still broken and only works when I run the alert.php script manually.

Note: I have been running on the daily updates, which leads me to believe an update on 4/11 caused the issue. I’ve now modified config.php to keep us on the monthly stable release, but our alerts are still broken.

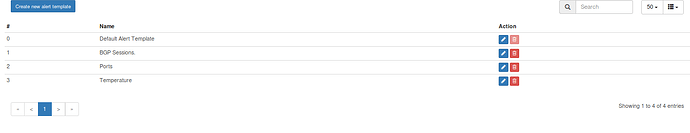

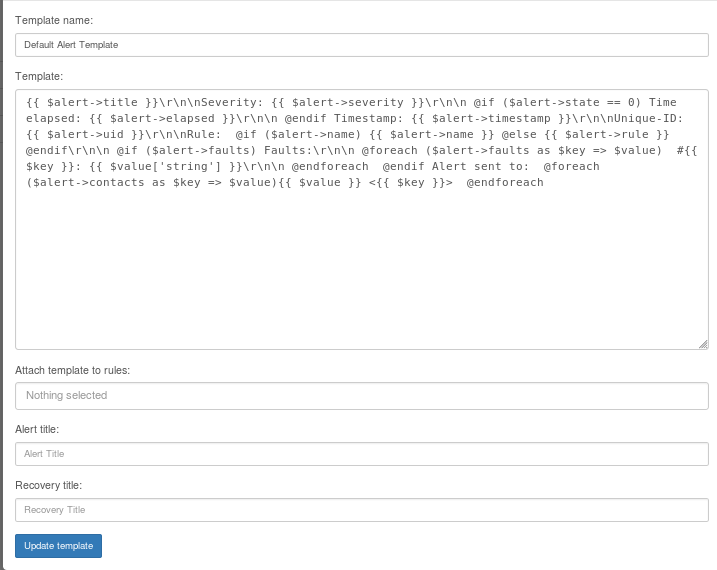

Most likely you have an error in your alert templates. Make sure you are using the new format and you don’t have any syntax errors.

I’m just importing them straight from the collection, except for 1 rule in particular.

No, not the Alert Rules, the Alert Templates.

Hi Kevin,

Thanks so much for the context. I guess I got confused because I haven’t actually done anything with the alert templates at all. I still have the default ones in place and all of our alerts worked great until 4/11.

I am also noting here that none of the default templates have ever been assigned to any alert rules.

Whoops, this actually broke on 4/11, not 4/24. I tested an msteams transport on 4/24, which is what threw me off.

Note:

SELECT * FROM eventlog, devices WHERE eventlog.device_id = devices.device_id AND eventlog.type = 'alert' ORDER BY datetime DESC

returns data only up to 4/11/2019
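For anyone who wants to repeat this check, here is a slightly tidier sketch of the same query with an explicit join and a row limit, so only the most recent alert events come back. The `hostname` and `message` column names are my assumption about the schema and are not taken from the query above; adjust them if your tables differ.

```sql
-- Sketch only: show the 20 most recent eventlog entries of type 'alert'.
-- Assumes devices.hostname and eventlog.message exist (not confirmed in this thread).
SELECT e.datetime,
       d.hostname,
       e.message
FROM eventlog AS e
JOIN devices AS d
  ON d.device_id = e.device_id
WHERE e.type = 'alert'
ORDER BY e.datetime DESC
LIMIT 20;
```

If the newest row is still dated 4/11/2019 while the alert history shows later triggers, that would match what I am describing here.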

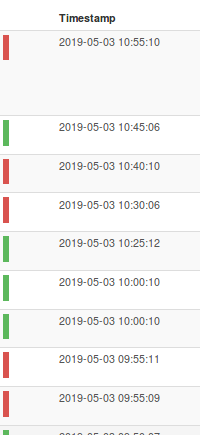

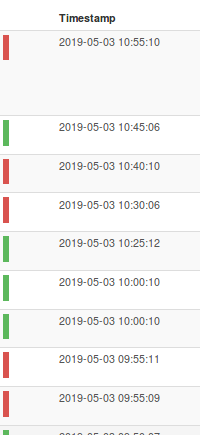

but we can clearly see here that the alerts are being triggered according to the alert history: