Sure…

and then:

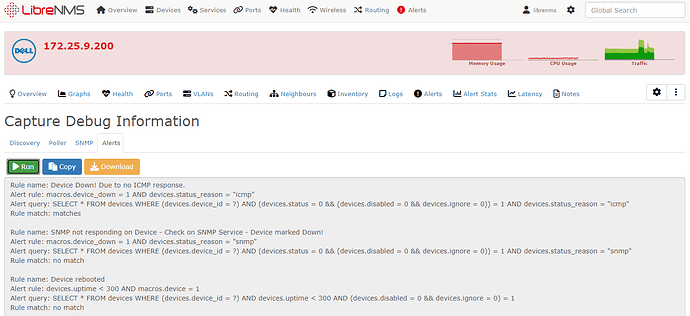

librenms@rk-librenms:~/scripts$ ./test-alert.php -h 351 -r 1 -d

SQL[SELECT alerts.id, alerts.alerted, alerts.device_id, alerts.rule_id, alerts.state, alerts.note, alerts.info FROM alerts WHERE alerts.device_id = 351 && alerts.rule_id = 1 [] 0.51ms]

SQL[SELECT alert_log.id,alert_log.rule_id,alert_log.device_id,alert_log.state,alert_log.details,alert_log.time_logged,alert_rules.rule,alert_rules.severity,alert_rules.extra,alert_rules.name,alert_rules.query,alert_rules.builder,alert_rules.proc FROM alert_log,alert_rules WHERE alert_log.rule_id = alert_rules.id && alert_log.device_id = ? && alert_log.rule_id = ? && alert_rules.disabled = 0 ORDER BY alert_log.id DESC LIMIT 1 [351,1] 0.61ms]

SQL[SELECT DISTINCT a.* FROM alert_rules a
LEFT JOIN alert_device_map d ON a.id=d.rule_id AND (a.invert_map = 0 OR a.invert_map = 1 AND d.device_id = ?)
LEFT JOIN alert_group_map g ON a.id=g.rule_id AND (a.invert_map = 0 OR a.invert_map = 1 AND g.group_id IN (SELECT DISTINCT device_group_id FROM device_group_device WHERE device_id = ?))
LEFT JOIN alert_location_map l ON a.id=l.rule_id AND (a.invert_map = 0 OR a.invert_map = 1 AND l.location_id IN (SELECT DISTINCT location_id FROM devices WHERE device_id = ?))
LEFT JOIN devices ld ON l.location_id=ld.location_id AND ld.device_id = ?
LEFT JOIN device_group_device dg ON g.group_id=dg.device_group_id AND dg.device_id = ?
WHERE a.disabled = 0 AND (
(d.device_id IS NULL AND g.group_id IS NULL AND l.location_id IS NULL)
OR (a.invert_map = 0 AND (d.device_id=? OR dg.device_id=? OR ld.device_id=?))
OR (a.invert_map = 1 AND (d.device_id != ? OR d.device_id IS NULL) AND (dg.device_id != ? OR dg.device_id IS NULL) AND (ld.device_id != ? OR ld.device_id IS NULL))
) [351,351,351,351,351,351,351,351,351,351,351] 1.45ms]

SQL[select * from `devices` where `device_id` = ? limit 1 [351] 0.52ms]

SQL[select * from `devices_attribs` where `devices_attribs`.`device_id` = ? and `devices_attribs`.`device_id` is not null [351] 0.45ms]

SQL[select * from `device_perf` where `device_perf`.`device_id` = ? and `device_perf`.`device_id` is not null order by `timestamp` desc limit 1 [351] 0.49ms]

SQL[select * from `alert_templates` where exists (select * from `alert_template_map` where `alert_templates`.`id` = `alert_template_map`.`alert_templates_id` and `alert_rule_id` = ?) limit 1 [1] 0.36ms]

SQL[select * from `alert_templates` where `name` = ? limit 1 ["Default Alert Template"] 0.34ms]

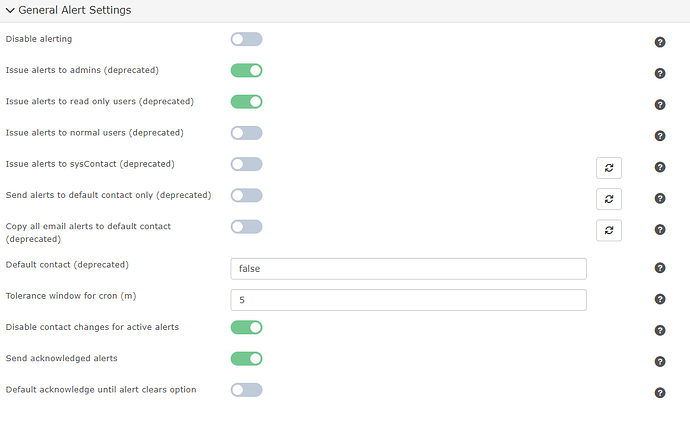

Reporting disabled by user setting

Issuing Alert-UID #20063/2:

SQL[SELECT `rule_id` FROM `alerts` WHERE `id`=? [3307] 0.36ms]

SQL[SELECT b.transport_id, b.transport_type, b.transport_name FROM alert_transport_map AS a LEFT JOIN alert_transports AS b ON b.transport_id=a.transport_or_group_id WHERE a.target_type='single' AND a.rule_id=? UNION DISTINCT SELECT d.transport_id, d.transport_type, d.transport_name FROM alert_transport_map AS a LEFT JOIN alert_transport_groups AS b ON a.transport_or_group_id=b.transport_group_id LEFT JOIN transport_group_transport AS c ON b.transport_group_id=c.transport_group_id LEFT JOIN alert_transports AS d ON c.transport_id=d.transport_id WHERE a.target_type='group' AND a.rule_id=? [1,1] 0.44ms]

:: Transport mail => SQL[select * from `alert_transports` where `alert_transports`.`transport_id` = ? limit 1 [3] 0.27ms]

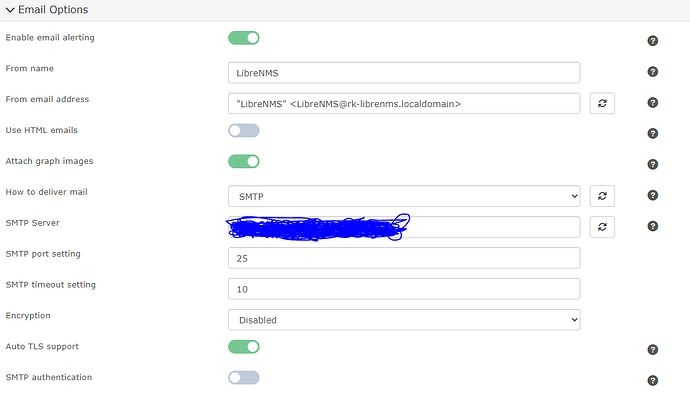

Attempting to email Alert for device 172.25.9.200 - Device Down! Due to no ICMP response. got acknowledged to: [email protected]

OKSQL[insert into `eventlog` (`reference`, `type`, `datetime`, `severity`, `message`, `username`, `device_id`) values (?, ?, ?, ?, ?, ?, ?) [null,"alert","2024-01-11 17:32:00",3,"Issued acknowledgment for rule 'Device Down! Due to no ICMP response.' to transport 'mail'","",351] 0.38ms]

:: Transport mail => SQL[select * from `alert_transports` where `alert_transports`.`transport_id` = ? limit 1 [4] 0.3ms]

Attempting to email Alert for device 172.25.9.200 - Device Down! Due to no ICMP response. got acknowledged to: [email protected]

OKSQL[insert into `eventlog` (`reference`, `type`, `datetime`, `severity`, `message`, `username`, `device_id`) values (?, ?, ?, ?, ?, ?, ?) [null,"alert","2024-01-11 17:32:00",3,"Issued acknowledgment for rule 'Device Down! Due to no ICMP response.' to transport 'mail'","",351] 0.23ms]