Dear All,

I cannot get through this issue, so a little help or some suggestions would be appreciated.

We performed a partition extension and a restart on one LibreNMS server. All went well.

After this restart we could not log in again: switching SELinux to permissive mode solved the issue.

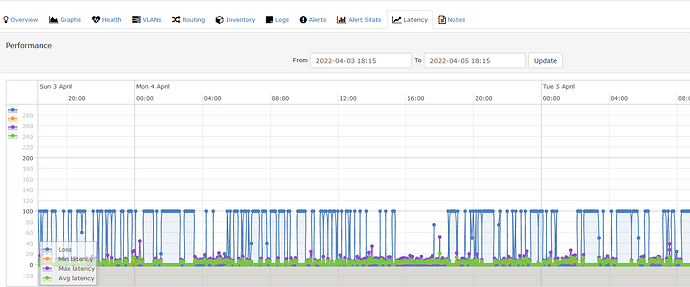

However, since then we have observed polling gaps and "device down" alerts for a few Juniper devices (OS junos, SRX300). After investigation, there appears to be no network or device issue.

What generates those gaps and alerts is that fping fails intermittently.

What is surprising is that it does not happen for all devices, only for some junos devices.

=> When launching fping directly from the CLI, no loss is seen:

[librenms-1 log]# '/usr/sbin/fping' '-e' '-q' '-c' '4' '-p' '600' '-t' '600' 'XX.XX.XX.XX'

172.22.38.133 : xmt/rcv/%loss = 4/4/0%, min/avg/max = 4.41/5.65/6.41

=> With a continuous ping running for hours from the server: no packet loss at all.

Losses are seen only in the cron/polling logs of the impacted devices.

Here is an excerpt from when it happens (IP replaced by XX):

Attempting to initialize OS: junos

OS initialized: LibreNMS\OS\Junos

Hostname: XX.XX.XX.XX

Device ID: 282

OS: junos

SQL[select hostname, overwrite_ip from devices where hostname = ? limit 1 ["XX.XX.XX.XX"] 0.47ms]

[FPING] '/usr/sbin/fping' '-e' '-q' '-c' '4' '-p' '600' '-t' '600' 'XX.XX.XX.XX'

response: {"xmt":4,"rcv":0,"loss":100,"min":0.0,"max":0.0,"avg":0.0,"dup":0,"exitcode":1}

SQL[select device_groups.*, device_group_device.device_id as pivot_device_id, device_group_device.device_group_id as pivot_device_group_id from device_groups inner join device_group_device on device_groups.id = device_group_device.device_group_id where device_group_device.device_id = ? [282] 0.8ms]

SQL[select exists(select * from alert_schedule where (start <= ? and end >= ? and (recurring = ? or (recurring = ? and ((time(start) < time(end) and time(start) <= ? and time(end) > ?) or (time(start) > time(end) and (time(end) <= ? or time(start) > ?))) and (recurring_day like ? or recurring_day is null)))) and (exists (select * from devices inner join alert_schedulables on devices.device_id = alert_schedulables.alert_schedulable_id where alert_schedule.schedule_id = alert_schedulables.schedule_id and alert_schedulables.alert_schedulable_type = ? and alert_schedulables.alert_schedulable_id = ?) or exists (select * from device_groups inner join alert_schedulables on device_groups.id = alert_schedulables.alert_schedulable_id where alert_schedule.schedule_id = alert_schedulables.schedule_id and alert_schedulables.alert_schedulable_type = ? and alert_schedulables.alert_schedulable_id in (?, ?)))) as exists ["2022-04-04T16:59:55.389187Z","2022-04-04T16:59:55.389187Z",0,1,"16:59:55","16:59:55","16:59:55","16:59:55","e[41m%","device",282,"device_group",3,40] 1.08ms]

SQL[INSERT IGNORE INTO device_perf (xmt,rcv,loss,min,max,avg,device_id,timestamp,debug) VALUES (:xmt,:rcv,:loss,:min,:max,:avg,:device_id,NOW(),:debug) {"xmt":4,"rcv":0,"loss":100,"min":0,"max":0,"avg":0,"device_id":282,"debug":"[]"} 1.65ms]

UnpingableSQL[UPDATE devices set status=?,status_reason=? WHERE device_id=? ["0","icmp",282] 1.65ms]
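To compare the poller's result with a manual run, the `response` object above can be reproduced outside the poller. Below is a minimal Python sketch, not LibreNMS code: `parse_fping_summary` and `run_fping` are hypothetical helper names. It assumes fping prints its summary in the form shown in the manual test above, and that the `min/avg/max` part is missing when no reply is received. It sticks to `universal_newlines=True` so it also runs on the Python 3.6 installed here.

```python
import re
import subprocess

# Matches fping's per-target summary line, e.g.
# "host : xmt/rcv/%loss = 4/4/0%, min/avg/max = 4.41/5.65/6.41".
# The min/avg/max part is optional (assumed absent on 100% loss).
SUMMARY_RE = re.compile(
    r"xmt/rcv/%loss = (\d+)/(\d+)/(\d+)%"
    r"(?:, min/avg/max = ([\d.]+)/([\d.]+)/([\d.]+))?"
)


def parse_fping_summary(line):
    """Turn one fping summary line into a dict, or None if it does not match."""
    match = SUMMARY_RE.search(line)
    if match is None:
        return None
    xmt, rcv, loss = (int(v) for v in match.group(1, 2, 3))
    # Unmatched optional groups are None; report them as 0.0 like the poller log does.
    rtt_min, rtt_avg, rtt_max = (
        float(v) if v else 0.0 for v in match.group(4, 5, 6)
    )
    return {"xmt": xmt, "rcv": rcv, "loss": loss,
            "min": rtt_min, "avg": rtt_avg, "max": rtt_max}


def run_fping(host, fping="/usr/sbin/fping"):
    """Run the same fping command as in the poller log and parse its summary."""
    cmd = [fping, "-e", "-q", "-c", "4", "-p", "600", "-t", "600", host]
    # Capture stdout and stderr together so the summary is found on either stream.
    proc = subprocess.run(cmd, stdout=subprocess.PIPE,
                          stderr=subprocess.STDOUT, universal_newlines=True)
    for line in proc.stdout.splitlines():
        stats = parse_fping_summary(line)
        if stats is not None:
            stats["exitcode"] = proc.returncode
            return stats
    return None
```

Calling `run_fping("XX.XX.XX.XX")` in a loop from cron, under the same user as the poller (assumed here to be `librenms`), and logging every result with `loss > 0` might show whether the losses depend on the execution context rather than on the network.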

We also tried disabling SELinux completely, but it did not improve the behavior.

./validate.php

| Component | Version |
|---|---|
| LibreNMS | 21.6.1-4-gd62bf9e3f |
| DB Schema | 2021_25_01_0127_create_isis_adjacencies_table (210) |
| PHP | 7.4.20 |
| Python | 3.6.8 |
| MySQL | 10.5.10-MariaDB |
| RRDTool | 1.7.2 |
| SNMP | NET-SNMP 5.7.2 |

[OK] Composer Version: 2.3.3

[OK] Dependencies up-to-date.

[OK] Database connection successful

[OK] Database schema correct

[INFO] Detected Python Wrapper

[OK] Connection to memcached is ok

[WARN] Your local git contains modified files, this could prevent automatic updates.

[FIX]:

You can fix this with ./scripts/github-remove

Modified Files:

config.php

Has anyone ever faced this kind of issue?

Could you suggest alternative tests?

Thanks

Simba