Hi guys,

My LibreNMS platform was working great until a few weeks ago.

I noticed gaps in all existing graphs.

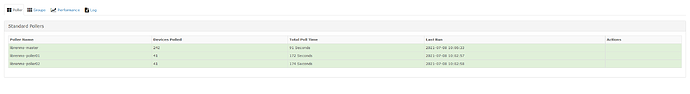

I also noticed the message “Polling took longer than 5 minutes! This will cause gaps in graphs.” showing up very often in the event log.

Two devices in particular, both of which bring in a lot of data, seem to be mainly responsible and are constantly mentioned in the messages above.

So …

Is there a proper way to debug this?

First, I would look for a hardware bottleneck.

CPU, memory and storage seem OK when looking at the localhost KPIs.

Since it is a VM running on a Proxmox box, I also checked the VM parameters in the Proxmox panel, and everything seems to be OK.

Any other ideas? Please share.

Second, I would try looking into the platform itself.

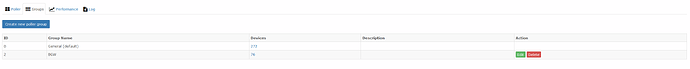

a) I already tried doubling the number of poller workers under “global settings->poller->distributed pollers”.

But nothing changed.

Is it OK to do this?

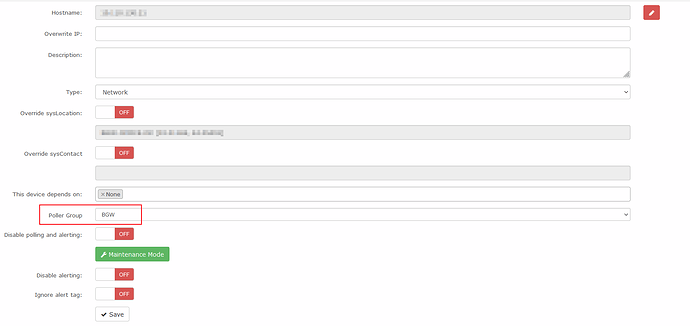

Is it possible to assign a certain number of dedicated pollers to a specific device?
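One possible reason why doubling the workers changed nothing can be illustrated with a toy model. It assumes that each device is polled by a single worker from start to finish and that a worker picks up the next waiting device as soon as it is free; the durations below are hypothetical, not measured on my install, and `cycle_length` is an illustrative helper, not LibreNMS code.

```python
import heapq

POLL_INTERVAL = 300  # seconds: the default 5-minute polling interval

def cycle_length(durations, workers):
    """Toy model: `workers` parallel workers each take the next device
    (longest first) when free; return when the last device finishes."""
    finish_times = [0.0] * workers  # min-heap: time at which each worker is free
    heapq.heapify(finish_times)
    for d in sorted(durations, reverse=True):
        start = heapq.heappop(finish_times)  # earliest available worker
        heapq.heappush(finish_times, start + d)
    return max(finish_times)

# Hypothetical per-device poll times (seconds): two heavy devices, 50 small ones.
durations = [420, 380] + [5] * 50

for workers in (8, 16):
    total = cycle_length(durations, workers)
    print(workers, total, total > POLL_INTERVAL)
```

In this model both worker counts give the same cycle length: the extra workers only finish the small devices sooner, while the slowest device on its own still takes longer than the 300 s interval.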

b) Remove unused data:

I will analyze the problematic devices, try to avoid collecting unused data, and disable unused poller modules.
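To decide which modules to disable first, a simple approach is to rank them by how long they take and drop the slowest optional ones until the estimated poll time fits a target. This is a minimal sketch assuming per-module run times have already been collected for one slow device; the timings, the 240 s target (chosen to leave headroom under 300 s), and the `modules_to_disable` helper are all hypothetical.

```python
def modules_to_disable(timings, budget, keep=()):
    """Greedily pick the slowest optional modules to disable until the
    estimated total poll time is at most `budget` seconds.
    Returns (modules_to_disable, estimated_remaining_seconds)."""
    total = sum(timings.values())
    chosen = []
    # Modules listed in `keep` are never proposed for disabling.
    optional = sorted((m for m in timings if m not in keep),
                      key=lambda m: timings[m], reverse=True)
    for module in optional:
        if total <= budget:
            break
        chosen.append(module)
        total -= timings[module]
    return chosen, total

# Hypothetical per-module timings (seconds) for one of the slow devices.
timings = {"ports": 210.0, "sensors": 45.0, "mac-accounting": 90.0,
           "ospf": 30.0, "os": 2.0, "processors": 3.0}

disable, estimate = modules_to_disable(timings, budget=240, keep={"ports", "os"})
print(disable, estimate)
```

Removing the largest items first minimizes how many modules have to go; if the remaining estimate is still above the budget, disabling modules alone is not enough for that device.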

c) Change the polling time:

This is the last option I would like to try.

For me, it is OK to use 5 minutes as the default polling interval.

I could increase this to 10 minutes, and perhaps the gaps would disappear, but I prefer to keep using 5 minutes.

OK… any ideas on debugging this would be welcome.

This is my validate.php output:

bash-4.2$ ./validate.php

====================================

Component | Version
--------- | -------
LibreNMS | 21.6.0-16-g131f5c7
DB Schema | 2021_06_07_123600_create_sessions_table (211)
PHP | 7.3.27
Python | 3.6.8
MySQL | 10.5.9-MariaDB
RRDTool | 1.4.8
SNMP | NET-SNMP 5.7.2

====================================

[OK] Composer Version: 2.1.3

[OK] Dependencies up-to-date.

[OK] Database connection successful

[OK] Database schema correct